Meta To Reduce Unwanted Messages To Teens On Facebook, Instagram

Protecting teens. Social media giant Meta to impose stricter message settings for teenagers on Facebook and Instagram

Meta Platforms has announced further measures to protect children, as it responds to ongoing concerns about the safety of underage users online.

In a blog post on Thursday, Meta said it would be introducing stricter message settings for teenagers on Instagram and Facebook.

The move comes after Meta announced earlier this month that it would “begin hiding more types of content for teens on Instagram and Facebook, in line with expert guidance.” Those protections covered posts about suicide and eating disorders.

Stricter DM rules

Meta also said earlier this month that it was automatically placing all teenagers into the most restrictive content control settings on Instagram and Facebook, restricting additional search terms on Instagram, and adding new nudges to encourage teens to close Instagram at night.

Now Meta is building more safeguards to protect teen users from unwanted direct messages on Instagram and Facebook.

“We want teens to have safe, age-appropriate experiences on our apps,” Meta wrote. “We’ve developed more than 30 tools and features to help support teens and their parents, and we’ve spent over a decade developing policies and technology to address content and behaviour that breaks our rules.”

“To help protect teens from unwanted contact on Instagram, we restrict adults over the age of 19 from messaging teens who don’t follow them, and we limit the type and number of direct messages (DMs) people can send to someone who doesn’t follow them to one text-only message,” Meta wrote.

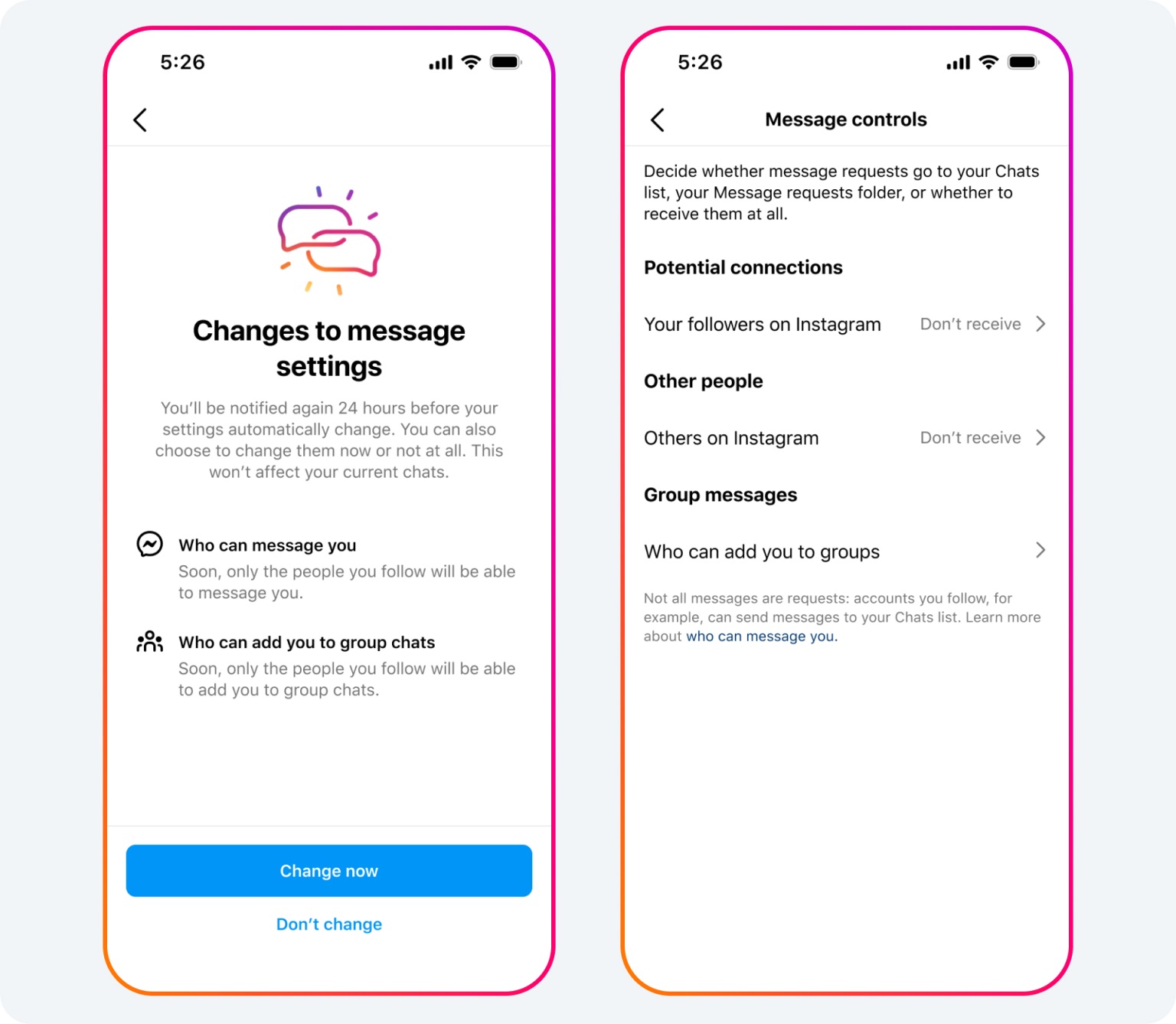

“Today we’re announcing an additional step to help protect teens from unwanted contact by turning off their ability to receive DMs from anyone they don’t follow or aren’t connected to on Instagram – including other teens – by default,” it added.

Under this new default setting, teenagers can only be messaged or added to group chats by people they already follow or are connected to. Teens in supervised accounts will need to get their parent’s permission to change this setting.

This default setting will apply to all teens under the age of 16 (or under 18 in certain countries).

Image credit: Meta Platforms

Meta is also making these changes to teens’ default settings on Messenger, where under 16s (or under 18 in certain countries) will only receive messages from Facebook friends, or people they’re connected to through phone contacts, for example.
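For readers who think in code, the default rule described above can be sketched roughly as follows. This is an illustrative model only, not Meta's implementation: the `Account` class, its fields and the `can_message` function are hypothetical names invented for this example, and the age thresholds are simply those quoted in the announcement.

```python
from dataclasses import dataclass, field
from typing import Optional

# Thresholds taken from the announcement: under 16, or under 18 in certain countries.
DEFAULT_AGE_LIMIT = 16
STRICTER_AGE_LIMIT = 18


@dataclass
class Account:
    """Hypothetical account model; none of these fields reflect Meta's real data."""
    name: str
    age: int
    follows: set = field(default_factory=set)      # names this user follows
    connections: set = field(default_factory=set)  # e.g. friends or phone contacts
    stricter_country: bool = False                 # True where the under-18 limit applies
    restrict_dms: Optional[bool] = None            # None means "use the age-based default"

    def dms_restricted(self) -> bool:
        """Return True if only followed or connected accounts may message this user."""
        if self.restrict_dms is not None:
            return self.restrict_dms
        limit = STRICTER_AGE_LIMIT if self.stricter_country else DEFAULT_AGE_LIMIT
        return self.age < limit


def can_message(sender: Account, recipient: Account) -> bool:
    """Apply the default rule: restricted users only hear from people they know."""
    if not recipient.dms_restricted():
        return True
    # The sender's own age is irrelevant: the rule covers other teens as well.
    return sender.name in recipient.follows or sender.name in recipient.connections


teen = Account("teen", age=15, follows={"friend"})
print(can_message(Account("friend", age=15), teen))    # someone the teen follows
print(can_message(Account("stranger", age=15), teen))  # another teen, not followed
```

The sketch leaves out parental supervision. In Meta's description, a teen in a supervised account would need a parent's permission before the `restrict_dms` override could be changed.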

Protecting children

In addition, Meta is planning to launch a new feature later this year that is designed to help protect teens from seeing unwanted and potentially inappropriate images in their messages from people they’re already connected to, and to discourage them from sending these types of images themselves.

The question of how to protect children and teenagers on social media has dogged Meta for years.

In October last year, 33 US states filed legal action against Meta, alleging its Instagram and Facebook platforms were harming children’s mental health.

The US states alleged Meta contributes to the youth mental health crisis by knowingly and deliberately designing features on Instagram and Facebook that addict children to its platforms.

That federal suit was reportedly the result of an investigation led by a bipartisan coalition of attorneys general from California, Florida, Kentucky, Massachusetts, Nebraska, New Jersey, Tennessee, and Vermont.

Prior to that, in October 2021, the head of Instagram confirmed the platform was ‘pausing’ the development of the “Instagram Kids” app, after the Wall Street Journal (WSJ) reported on leaked internal research which suggested that Instagram had a harmful effect on teenagers, particularly teen girls.

Facebook had previously said it would require Instagram users to share their date of birth, in an effort to improve child safety.

Following the first reports, a consortium of news organisations published their own findings based on leaked documents from whistleblower Frances Haugen, who testified before Congress and a British parliamentary committee about what she found.