Microsoft AI Engineer Flags Copilot Safety Concerns

Engineer alleges Microsoft’s Copilot Designer is creating vulgar, sexual and violent content, and has written to the FTC

Microsoft has reportedly been dragged into the troubling issue of AI tools being misused to create inappropriate images.

CNBC reported that AI engineer Shane Jones, who has worked at Microsoft for six years, has flagged some alarming content generated by the AI image generator, Copilot Designer (formerly known as Bing Image Creator).

The report comes after Google last month paused image generation by its Gemini AI model (formerly known as Bard), which had produced “inaccuracies in some historical image generation depictions”.

Google issue

The pause followed criticism of Google’s Gemini AI tool, which had depicted specific white figures such as Vikings, the US Founding Fathers, or groups of Nazi-era German soldiers as people of colour – possibly an overcorrection for long-standing concerns about racial bias in AI.

Now there appears to be a similar concern about images generated by Microsoft’s AI tools.

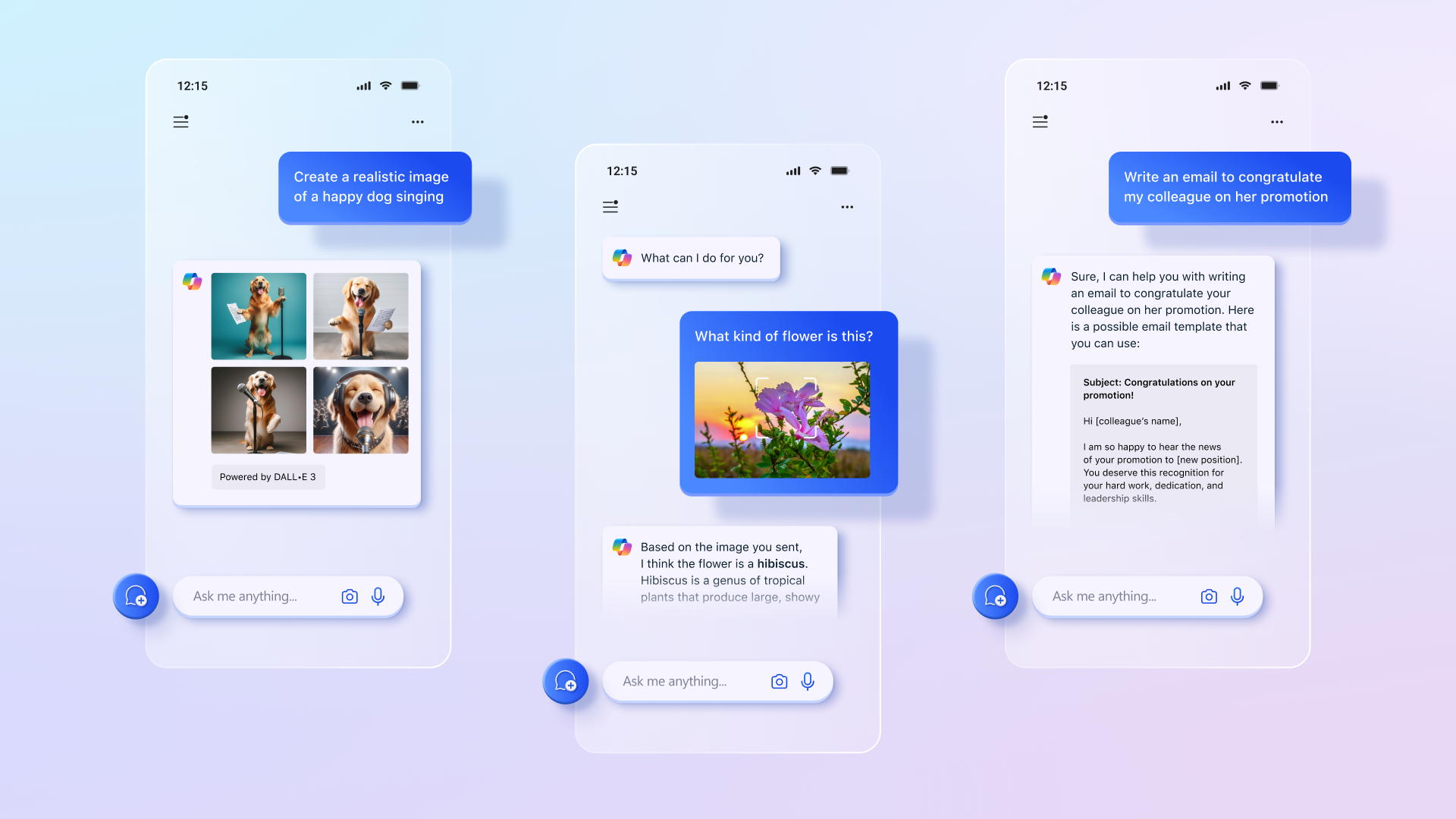

According to the CNBC report, Microsoft’s Shane Jones had been testing the company’s AI image generator in his free time back in December. Like OpenAI’s DALL-E, the tool allows users to enter text prompts to create pictures.

OpenAI last month also unveiled an AI model that can create short-form videos from simple text instructions.

Troubling content

Since November 2023 Jones had reportedly been actively testing Copilot Designer for vulnerabilities, a practice known as red-teaming.

In that time, he reportedly saw the tool generate images that ran far afoul of Microsoft’s oft-cited responsible AI principles.

CNBC reported that Microsoft’s AI service had allegedly depicted demons and monsters alongside terminology related to abortion rights, teenagers with assault rifles, sexualised images of women in violent tableaus, and underage drinking and drug use.

Additionally, Copilot Designer reportedly generated images of Disney characters, such as Elsa from Frozen, in scenes at the Gaza Strip “in front of wrecked buildings and ‘free Gaza’ signs.” It also created images of Elsa wearing an Israel Defense Forces uniform while holding a shield with Israel’s flag.

Besides being inappropriate, these images may also have breached copyright rules.

CNBC said it was able to recreate all of those scenes, which were generated in the past three months, this week using the Copilot tool.

“It was an eye-opening moment,” Jones, who continues to test the image generator, told CNBC in an interview. “It’s when I first realised, wow this is really not a safe model.”

Microsoft informed

According to CNBC, Jones is currently a principal software engineering manager at corporate headquarters in Redmond, Washington.

He said he doesn’t work on Copilot in a professional capacity.

Rather, as a red teamer, Jones is among the employees and outsiders who choose, in their free time, to test the company’s AI technology and see where problems may be surfacing.

Jones was so alarmed by his experience that he started internally reporting his findings in December. While Microsoft reportedly acknowledged his concerns, it was unwilling to take the product off the market.

Jones said Microsoft referred him to OpenAI and, when he didn’t hear back from the company, he posted an open letter on LinkedIn asking the startup’s board to take down DALL-E 3 (the latest version of the AI model) for an investigation.

Microsoft’s legal department then reportedly told Jones to remove his post immediately, and he complied. In January, Jones wrote a letter to US senators about the matter, and later met with staffers from the Senate’s Committee on Commerce, Science and Transportation.

FTC letter

Now Jones has reportedly escalated the matter.

On Wednesday, Jones reportedly sent a letter to Federal Trade Commission Chair Lina Khan, and another to Microsoft’s board of directors. He shared the letters with CNBC ahead of time.

“Over the last three months, I have repeatedly urged Microsoft to remove Copilot Designer from public use until better safeguards could be put in place,” Jones wrote in the letter to Khan. He added that, since Microsoft has “refused that recommendation,” he is calling on the company to add disclosures to the product and change the rating on Google’s Android app to make clear that it’s only for mature audiences.

“Again, they have failed to implement these changes and continue to market the product to ‘Anyone. Anywhere. Any Device,’” he wrote. Jones said the risk “has been known by Microsoft and OpenAI prior to the public release of the AI model last October.”